The OWASP LLM Security & Governance Checklist, the industry’s closest thing to a canonical standard, has evolved significantly for 2026. It no longer treats governance as a set-and-forget configuration. It demands proactive, real-time controls capable of handling autonomous agents, complex data supply chains, and third-party model dependencies. What the checklist doesn’t tell you is how to operationalize any of its components at scale.

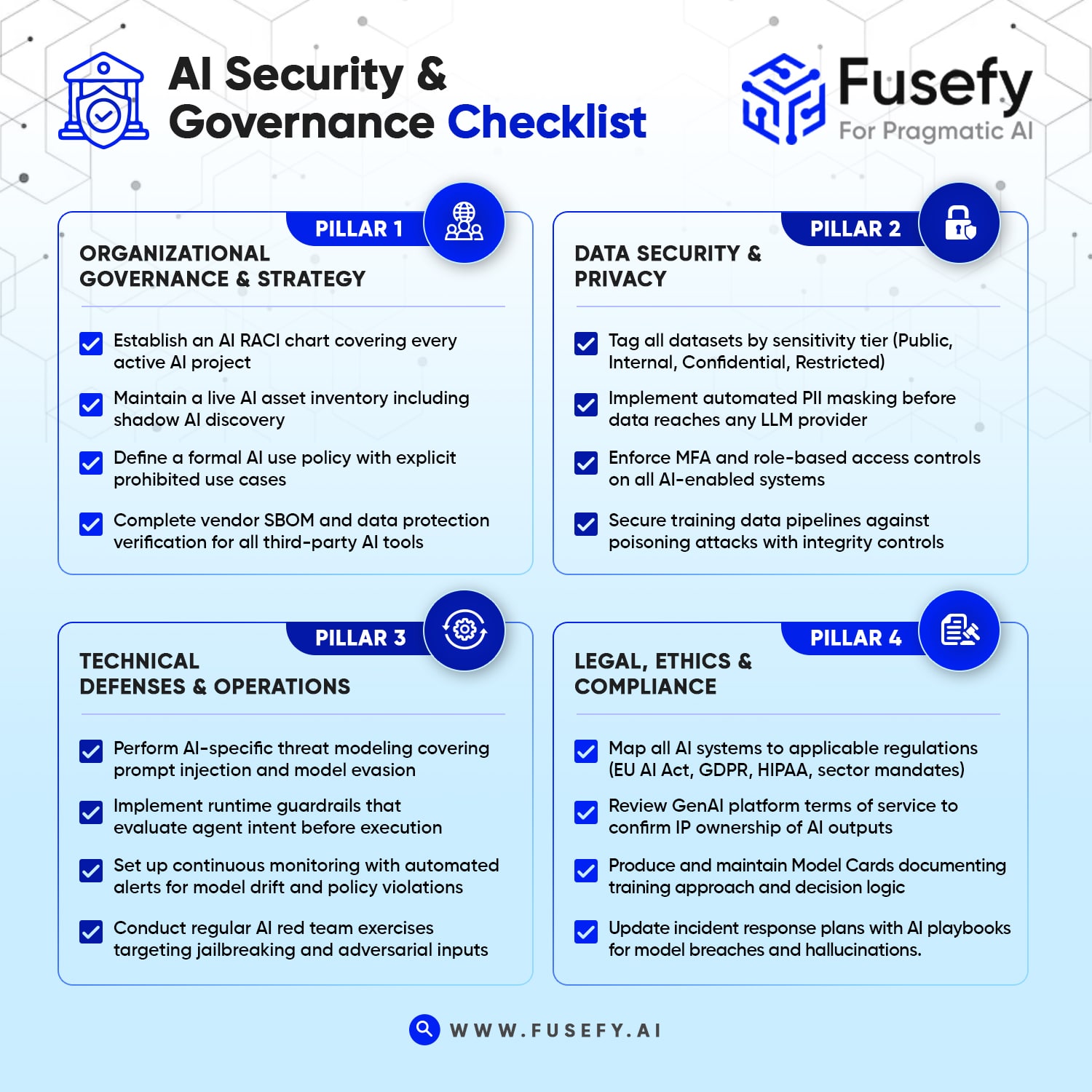

This blog walks through the four pillars of AI security and governance (organizational governance & strategy; data security & privacy; technical defenses & operations; and legal, ethics & compliance): what they require, why they matter, and how to turn checklist intentions into enforceable reality.

Organizational governance & strategy

Every AI initiative inside an organization starts with an explicit definition of ownership. The core requirements here are deceptively simple. Start by establishing an AI RACI chart that defines who is Responsible, Accountable, Consulted, and Informed for every AI project. Next, build an AI Asset Inventory: a live catalog of every model, API, and AI-enabled tool in use, including shadow AI that engineering teams have adopted without formal approval. Then develop a formal AI Use Policy that specifies what is and isn’t permitted (for example, prohibiting the use of public LLMs for proprietary source code). Finally, for any third-party AI vendor, request their Software Bill of Materials (SBOM) and verify data protection compliance before integration.
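
As a rough illustration, the inventory and RACI requirements above can be modeled as a small data structure with an automated gap check. This is a minimal sketch, not part of the OWASP checklist itself: the `AIAsset` fields, the `governance_gaps` helper, and the sample assets are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field

RACI_ROLES = ("responsible", "accountable", "consulted", "informed")


@dataclass
class AIAsset:
    """One entry in a hypothetical AI asset inventory."""
    name: str
    kind: str                        # e.g. "model", "api", "tool"
    approved: bool                   # False marks shadow AI
    third_party: bool = False
    sbom_received: bool = False
    data_protection_verified: bool = False
    raci: dict = field(default_factory=dict)  # RACI role -> owner


def governance_gaps(asset: AIAsset) -> list[str]:
    """Return the governance gaps found for a single asset."""
    gaps = []
    if not asset.approved:
        gaps.append("shadow AI: no formal approval")
    missing = [role for role in RACI_ROLES if not asset.raci.get(role)]
    if missing:
        gaps.append("RACI incomplete: " + ", ".join(missing))
    if asset.third_party and not asset.sbom_received:
        gaps.append("third-party vendor: SBOM not received")
    if asset.third_party and not asset.data_protection_verified:
        gaps.append("third-party vendor: data protection not verified")
    return gaps


# Sample inventory (illustrative data only).
inventory = [
    AIAsset("support-chatbot", "model", approved=True,
            raci={"responsible": "ml-team", "accountable": "cto",
                  "consulted": "legal", "informed": "support"}),
    AIAsset("code-assistant-plugin", "tool", approved=False, third_party=True),
]

for asset in inventory:
    for gap in governance_gaps(asset):
        print(f"{asset.name}: {gap}")
```

Keeping the inventory as structured data rather than a document is what makes a check like this repeatable on every change.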

Data security & privacy

This is where AI governance intersects most directly with existing data protection obligations and where the stakes are highest. An LLM that receives PII it shouldn’t have seen, or that was fine-tuned on data that was later poisoned, creates liability that spans GDPR, HIPAA, and increasingly the EU AI Act.

The checklist here requires data classification: every dataset tagged by sensitivity tier (Public, Internal, Confidential, Restricted), with access controls enforced at the model level. It demands prompt and output filtering that automatically masks or redacts PII before data reaches any AI provider. It calls for least-privilege access with MFA and role-based controls on all AI-enabled systems. And critically, it requires training pipeline security: protecting the data used to fine-tune models from poisoning attacks that can corrupt model behavior at the source.
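
To make the first two requirements concrete, here is a minimal sketch of a tier gate combined with prompt redaction. The `Tier` enum mirrors the four sensitivity tiers named above; the `redact` and `prepare_prompt` helpers and the two regex patterns are assumptions for illustration, and real PII detection needs far broader coverage than two patterns.

```python
import re
from enum import IntEnum


class Tier(IntEnum):
    """Sensitivity tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Illustrative patterns only: an email address and a US-style SSN.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each PII match with a bracketed label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def prepare_prompt(text: str, data_tier: Tier, model_max_tier: Tier) -> str:
    """Block data above the model's cleared tier, then redact what remains."""
    if data_tier > model_max_tier:
        raise PermissionError(
            f"{data_tier.name} data may not be sent to a model "
            f"cleared only for {model_max_tier.name}"
        )
    return redact(text)


print(prepare_prompt("Contact jane.doe@example.com, SSN 123-45-6789",
                     Tier.INTERNAL, Tier.CONFIDENTIAL))
```

The gate runs before redaction on purpose: data that should never reach a given model is rejected outright rather than sent in masked form.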

Technical defenses & operations

This is the pillar that has changed most significantly in 2026. The rise of autonomous AI agents, which take actions on a user’s behalf, has introduced an entirely new class of risk, and it requires controls that evaluate intent and authorization before execution.
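
One way to picture an intent-and-authorization pre-check is a gate that every agent tool call must pass before it runs. The sketch below is an assumption-laden illustration: `ActionRequest`, `USER_PERMISSIONS`, `INTENT_TOOLS`, and the tool names are hypothetical, and a production system would derive intent from something richer than a single label.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRequest:
    user: str            # user the agent is acting for
    tool: str            # tool the agent wants to call
    stated_intent: str   # task category the user actually asked for


# Hypothetical policy tables.
USER_PERMISSIONS = {
    "alice": {"search_docs", "send_email"},
    "bob": {"search_docs"},
}
INTENT_TOOLS = {
    "research": {"search_docs"},
    "outreach": {"search_docs", "send_email"},
}


def authorize(request: ActionRequest) -> tuple[bool, str]:
    """Check authorization first, then whether the tool fits the intent."""
    if request.tool not in USER_PERMISSIONS.get(request.user, set()):
        return False, "user not authorized for tool"
    if request.tool not in INTENT_TOOLS.get(request.stated_intent, set()):
        return False, "tool does not match stated intent"
    return True, "ok"


def execute(request: ActionRequest, tools: dict):
    """Run the tool only if the pre-check passes."""
    allowed, reason = authorize(request)
    if not allowed:
        raise PermissionError(f"blocked {request.tool}: {reason}")
    return tools[request.tool]()


tools = {"search_docs": lambda: "results", "send_email": lambda: "sent"}
print(execute(ActionRequest("alice", "send_email", "outreach"), tools))
print(authorize(ActionRequest("alice", "send_email", "research")))
```

Both lookups default to an empty set, so unknown users and unknown intents are denied rather than allowed.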

Beyond agents, the checklist requires AI-specific threat modeling for GenAI-accelerated attacks like hyper-personalized phishing, prompt injection, and model evasion, techniques that didn’t exist in traditional threat models. It demands runtime guardrails that monitor agent behavior in real time and calls for continuous monitoring with automated alerts for model drift, unusual token usage patterns, and repeated policy violations. It also mandates regular AI red team exercises specifically designed to test jailbreaking, evasion, and adversarial input techniques.
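
Two of the monitoring signals named above, unusual token usage and repeated policy violations, can be sketched with nothing more than a rolling window and a counter. The `UsageMonitor` class, its thresholds, and the z-score rule are assumptions chosen for the example; model drift detection is a separate problem and is not attempted here.

```python
from collections import deque
from statistics import mean, stdev


class UsageMonitor:
    """Hypothetical per-client monitor for token usage and policy violations."""

    def __init__(self, window=50, z_threshold=3.0, max_violations=3):
        self.tokens = deque(maxlen=window)   # recent token counts
        self.violations = 0
        self.z_threshold = z_threshold
        self.max_violations = max_violations

    def record(self, token_count, policy_violation=False):
        """Record one request and return any alerts it triggers."""
        alerts = []
        # Compare against history only once there is enough of it.
        if len(self.tokens) >= 5:
            mu, sigma = mean(self.tokens), stdev(self.tokens)
            if sigma > 0 and abs(token_count - mu) / sigma > self.z_threshold:
                alerts.append("unusual token usage")
        self.tokens.append(token_count)
        if policy_violation:
            self.violations += 1
            if self.violations >= self.max_violations:
                alerts.append("repeated policy violations")
        return alerts


monitor = UsageMonitor()
for count in [100, 110, 90, 105, 95, 100]:
    monitor.record(count)
print(monitor.record(5000))
```

A z-score is a simple baseline; it assumes usage is roughly stable, so seasonal or bursty workloads would need a different rule.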

Legal, ethics & compliance

The regulatory environment around AI has hardened considerably. The EU AI Act moved from guidance to enforcement this year. GDPR enforcement bodies have started scrutinizing LLM data flows specifically. HIPAA guidance has been updated to cover AI-generated clinical content. And intellectual property questions around AI outputs, such as who owns them and who is liable for infringement, are now live legal issues.

The checklist here requires regulatory mapping that traces AI systems against applicable laws by jurisdiction and sector. It demands review of GenAI platform terms of service to ensure the organization retains ownership of AI outputs and isn’t exposed to copyright infringement liability. It calls for Model Cards documenting how each model was trained and how it makes decisions, which form the foundation of explainability requirements under multiple frameworks. And it requires updating incident response plans to include AI-specific playbooks for events like a model breach, data exfiltration through a prompt, or widespread hallucination impacts on downstream systems.
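
Regulatory mapping by jurisdiction and sector lends itself to a simple lookup over system attributes. The sketch below is illustrative only: the `AISystem` fields and the applicability rules in `applicable_regulations` are deliberately simplified assumptions, and real scoping decisions depend on legal review rather than three `if` statements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    name: str
    jurisdictions: frozenset     # e.g. {"EU", "US"}
    sectors: frozenset           # e.g. {"healthcare"}
    processes_personal_data: bool


def applicable_regulations(system: AISystem) -> list[str]:
    """Map one system to candidate regulations (simplified assumptions)."""
    regs = []
    if "EU" in system.jurisdictions:
        regs.append("EU AI Act")
        if system.processes_personal_data:
            regs.append("GDPR")
    if "US" in system.jurisdictions and "healthcare" in system.sectors:
        regs.append("HIPAA")
    return regs


# Sample systems (illustrative data only).
systems = [
    AISystem("triage-bot", frozenset({"EU", "US"}),
             frozenset({"healthcare"}), True),
    AISystem("marketing-copy", frozenset({"US"}),
             frozenset({"retail"}), False),
]

for system in systems:
    print(system.name, "->", applicable_regulations(system))
```

Encoding the mapping as code means a new deployment region or sector shows up as a diff that can be reviewed, instead of a spreadsheet that quietly goes stale.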

How Fusefy helps enterprises tick off the AI security and governance checklist

Fusefy automates the evidence layer across all four pillars of the OWASP checklist, replacing manual reviews, self-reported inventories, and policy documents with continuous, code-grounded verification.

For organizational governance, it auto-discovers every AI service, API, and shadow tool in the codebase on every scan, links control gaps to code owners and commit history for live RACI tracing, and flags third-party integrations missing SBOM or data protection verification.

On data security, it verifies that PII masking functions are called consistently across every API entry point, including edge-case branches, and confirms that data classification logic is enforced at the model level. It also validates that MFA and role-based access are implemented in code and not just declared in configuration, and scans training pipelines for integrity controls that guard against poisoning attacks.
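
To give a feel for what code-grounded verification of PII masking can look like, here is a minimal static check built on Python’s standard `ast` module. It is not Fusefy’s implementation: the `route` decorator, `mask_pii`, and `call_model` are hypothetical names, and the check only flags entry points that never call the masking function. Proving coverage on every branch would need control-flow analysis, which this sketch does not attempt.

```python
import ast

# Hypothetical service code to audit; it is parsed, never executed.
SOURCE = '''
@route("/chat")
def chat(request):
    return call_model(mask_pii(request.text))

@route("/summarize")
def summarize(request):
    return call_model(request.text)

def helper(text):
    return text.strip()
'''


def is_entry_point(func):
    """True if the function carries a @route or @route(...) decorator."""
    for dec in func.decorator_list:
        target = dec.func if isinstance(dec, ast.Call) else dec
        if isinstance(target, ast.Name) and target.id == "route":
            return True
    return False


def calls(func, name):
    """True if a direct call to `name` appears anywhere inside the function."""
    return any(
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id == name
        for node in ast.walk(func)
    )


def unmasked_entry_points(source):
    """List entry-point functions that never call mask_pii."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, ast.FunctionDef)
        and is_entry_point(node)
        and not calls(node, "mask_pii")
    ]


print(unmasked_entry_points(SOURCE))
```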

For technical defenses, Fusefy runs LLM-based evaluations tuned specifically for GenAI threat vectors like prompt injection, jailbreak-prone input handling, and model evasion, and flags agents that execute actions without intent or authorization pre-checks as high-confidence violations. Its AWS Step Functions architecture automatically re-runs affected controls whenever monitored files change, keeping drift detection current without manual intervention. Every finding is trust-adjusted by evidence quality, so teams know not just what failed but how much weight to give each finding.
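
The idea of re-running only the controls affected by a change comes down to mapping changed files to the controls that depend on them. The sketch below shows that selection step in plain Python. It says nothing about how Fusefy’s Step Functions workflow is actually built: `CONTROL_WATCHLIST`, the control IDs, and the file patterns are all hypothetical.

```python
from fnmatch import fnmatch

# Hypothetical mapping from control IDs to the file patterns they depend on.
CONTROL_WATCHLIST = {
    "pii-masking": ["api/*.py", "middleware/redaction.py"],
    "agent-authz": ["agents/*.py"],
    "training-integrity": ["pipelines/train_*.py"],
}


def affected_controls(changed_files):
    """Return the sorted control IDs whose watched patterns match a change."""
    hits = set()
    for control, patterns in CONTROL_WATCHLIST.items():
        if any(fnmatch(path, pattern)
               for path in changed_files
               for pattern in patterns):
            hits.add(control)
    return sorted(hits)


print(affected_controls(["api/chat.py", "README.md"]))
print(affected_controls(["agents/planner.py", "pipelines/train_v2.py"]))
```

In a setup like this, the list of changed files would typically come from a commit or pull request diff, and the returned IDs would decide which evaluations to queue.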

On the legal and compliance side, findings are mapped directly to EU AI Act, GDPR, and HIPAA clauses the moment a report is generated. Every AI-generated finding is validated against actual code before surfacing, to eliminate hallucinations, and model metadata is extracted from the codebase to support Model Card production. The result is an audit artifact that proves execution.

AUTHOR

Sivakumar Chellappa

With extensive expertise in Data, Cloud, Analytics and AI, Sivakumar Chellappa drives innovative data-driven solutions that bridge technology and business strategy.