Anthropic Skills

In late 2025, Anthropic launched Claude Skills: modular packages of instructions, scripts, and domain expertise. A Skill can be thought of as a specialized playbook. For example, a “Financial Reporting Skill” teaches Claude exactly how to format a balance sheet and which red flags to look for. Skills use progressive disclosure: only a Skill’s metadata is loaded initially, and the full content is loaded once it becomes relevant, which cuts token costs. Skills can bundle code that runs in a sandboxed execution environment, and they are authored in Markdown, which keeps them accessible to non-developers. They’re portable across Claude.ai, the API, Claude Code, and the Agent SDK, and can be shared enterprise-wide through Git.

“The thing that’s interesting to me about Skills is basically about agents… [letting organizations teach Claude to excel] in their specific context,” rather than on generic benchmarks, says Brad Abrams, Anthropic’s product lead.

Early adopters report strong returns from Skills. Rakuten, the Japanese e-commerce giant, integrated Skills into finance workflows, cutting reporting from roughly a day to about an hour (an 87.5% reduction, assuming an eight-hour working day). Box automates cloud storage tasks such as Excel manipulation; Canva streamlines design work while enforcing brand compliance; and Abrams demoed PowerPoint Skills generating “well-formatted slides that are easy to digest” for market analysis without extra prompting.

These use cases highlight Skills’ edge: eliminating prompt engineering cycles for repeatable, context-aware automation.

MCP: The Connectivity Standard

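Complementing Skills is the Model Context Protocol (MCP), an open standard that Anthropic introduced in late 2024 and that has been governed by the Linux Foundation’s Agentic AI Foundation since December 2025. Hosts such as Claude Desktop connect to MCP servers that expose tools, resources, and prompts through JSON-RPC 2.0, carried over either STDIO or HTTP (originally HTTP with SSE, since superseded in the specification by Streamable HTTP). This solves the N×M integration problem: instead of building a custom connector for every pairing of N models and M external systems, each side implements the protocol once.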
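
To make the wire format concrete, here is a minimal sketch of the JSON-RPC 2.0 requests a host might send to an MCP server to discover and then call a tool. The method names `tools/list` and `tools/call` come from the MCP specification; the tool name `query_inventory`, its arguments, and the helper `jsonrpc_request` are hypothetical and used only for illustration.

```python
import itertools
import json

_ids = itertools.count(1)  # each request in a session needs a unique id


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object as a plain dict."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discovery: ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invocation: call one tool by name (tool name and arguments are hypothetical).
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "query_inventory", "arguments": {"sku": "ABC-123"}},
)

# Over the STDIO transport, each message is written as a single line of JSON.
for message in (list_tools, call_tool):
    print(json.dumps(message))
```

A real host would first perform the protocol's initialization handshake and would read the server's responses; both are omitted here to keep the sketch short.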

MCP supports capability discovery, bidirectional communication, elicitation (a server asking the user for input), and roots that bound file access. More than 10,000 servers already exist for GitHub, databases, Stripe, and more.

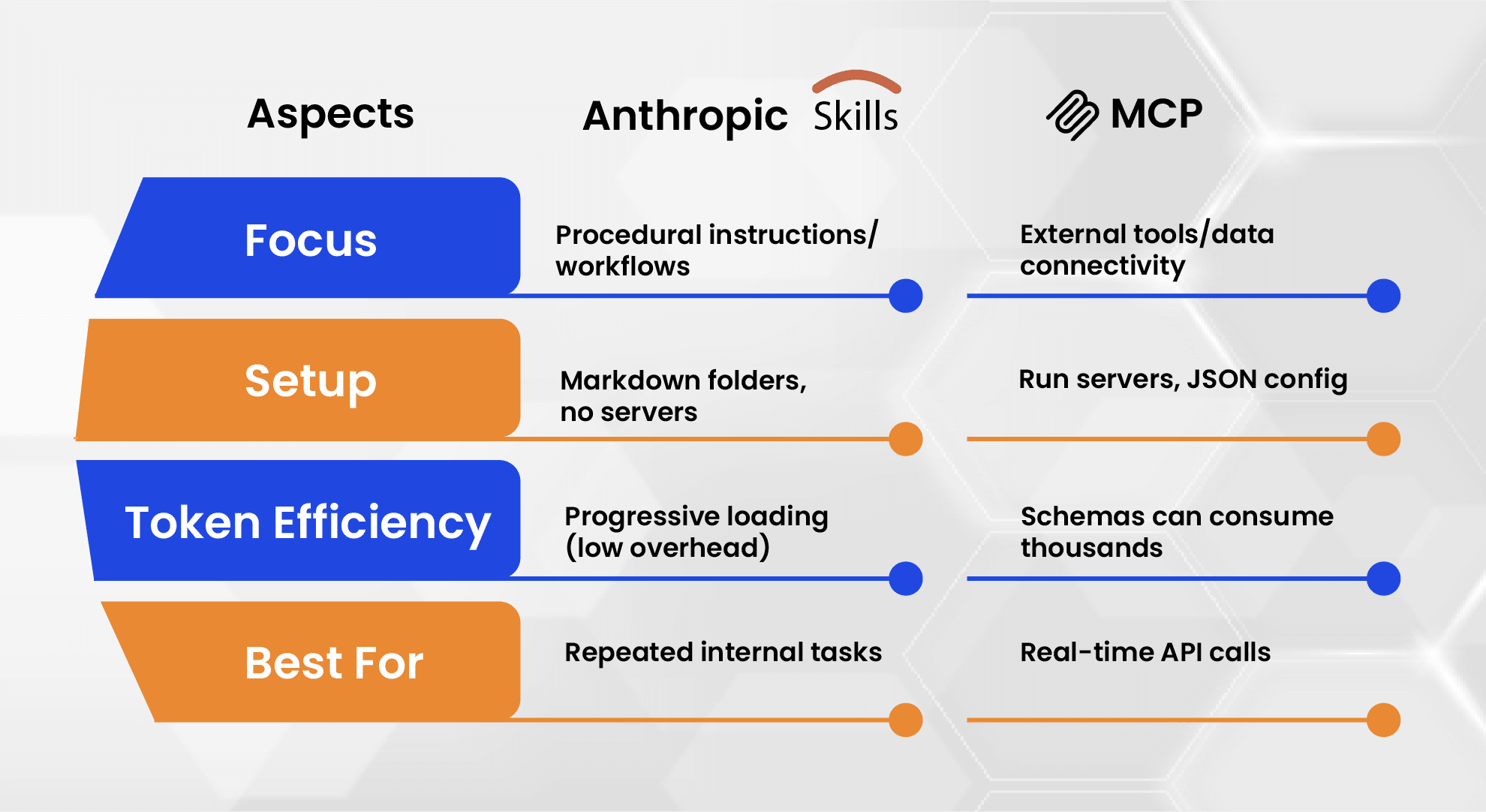

Anthropic Skills vs. MCP

The distinction matters because of the following shifts that have occurred since these two technologies began to be used together:

Shift 1: The End of “Context Bloat”

Before Skills, we had to paste massive system prompts into every chat. Now, through progressive disclosure, a Skill’s full content is loaded into Claude’s “active memory” (its context window) only when the model determines that it is actually needed. MCP does the same for data: it pulls only the specific rows or files required, rather than the whole database.
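
The idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Anthropic's implementation: the `Skill` class, the `build_context` function, and the sample skills are hypothetical, and relevance is decided by a naive keyword overlap standing in for the model's own judgment.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str      # short metadata, always placed in context
    body: str             # full instructions, loaded only on demand
    loaded: bool = False


def build_context(skills, user_request):
    """Return the text placed in context for one request.

    Every skill contributes its one-line metadata; only skills judged
    relevant contribute their full body. Relevance here is a naive keyword
    overlap, a stand-in for the model's own decision.
    """
    request_words = set(user_request.lower().split())
    parts = []
    for skill in skills:
        parts.append(f"[{skill.name}] {skill.description}")
        keywords = set(skill.description.lower().split())
        skill.loaded = bool(request_words & keywords)
        if skill.loaded:
            parts.append(skill.body)
    return "\n".join(parts)


skills = [
    Skill("financial-reporting", "format balance sheet reports",
          "FULL FINANCE PLAYBOOK " * 50),
    Skill("brand-slides", "build branded slides",
          "FULL SLIDE PLAYBOOK " * 50),
]

context = build_context(skills, "Please format the quarterly balance sheet")
everything = "\n".join(s.body for s in skills)
print(len(context), "characters loaded vs", len(everything), "with every body pasted in")
```

Only the finance skill's body enters the context here; the slide skill costs a single metadata line until a request actually needs it.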

Shift 2: “Vibe-Coding” vs. Engineering

Skills let non-technical users “program” Claude using Markdown and examples, while MCP lets engineers build robust, secure gateways to enterprise data. As a result, a business analyst can now use a “Market Research Skill” that leverages an “MCP Fetch Server” to crawl the live web, combining human-level strategy with real-time data.

Shift 3: Security & Portability

MCP itself is now part of the Agentic AI Foundation (AAIF) under the Linux Foundation. While Skills are often tailored to a specific user’s or team’s style, MCP is a cross-vendor standard that OpenAI and Google have adopted too.

Choose Skills for quick, low-code standardization of internal processes, and choose MCP for plugging into live enterprise tools. When the two are combined, MCP fetches the relevant data and Skills process it in line with company rules.

Example: For supply chain triage, MCP first pulls live stock levels from PostgreSQL or Kafka, which suits volatile operations. Skills then ingest the batched inventory data and apply firm-specific rules (e.g., custom alerts, branded outputs).
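
A minimal sketch of this division of labor follows. Everything in it is hypothetical: `fetch_live_stock` is a stub standing in for an MCP tool call so the example runs on its own, and the rules in `triage` are invented examples of the firm-specific logic a Skill would describe.

```python
from dataclasses import dataclass


@dataclass
class StockLevel:
    sku: str
    on_hand: int
    reorder_point: int


def fetch_live_stock():
    """Stand-in for an MCP tool call that queries a live inventory source.

    A real deployment would call an MCP server here; this stub returns
    fixed rows so the example is self-contained.
    """
    return [
        StockLevel("WIDGET-1", on_hand=12, reorder_point=50),
        StockLevel("WIDGET-2", on_hand=400, reorder_point=100),
        StockLevel("WIDGET-3", on_hand=0, reorder_point=20),
    ]


def triage(rows):
    """Apply firm-specific rules, the part a Skill would encode."""
    alerts = []
    for row in rows:
        if row.on_hand == 0:
            alerts.append((row.sku, "CRITICAL", "out of stock"))
        elif row.on_hand < row.reorder_point:
            alerts.append((row.sku, "WARNING", "below reorder point"))
    return alerts


alerts = triage(fetch_live_stock())
for sku, severity, reason in alerts:
    print(f"{severity}: {sku} is {reason}")
```

Keeping the data access and the business rules in separate functions mirrors the split in the text: the fetch step can be swapped for a different source without touching the rules.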

Scaling Through Modular Orchestration

For large firms, scaling means moving away from “all-in-one” prompts toward a modular agentic architecture in which MCP acts as the universal plumbing and Anthropic Skills serve as the specialized brainpower. Rather than building fragile, custom integrations for every department, enterprises are deploying a centralized MCP gateway to standardize how AI models access internal databases and legacy APIs.
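
One way to picture such a gateway is as a single registry that routes namespaced tool calls to departmental backends. The sketch below is an assumption about how a gateway could be organized, not a description of any specific product; the `McpGateway` class, the `namespace.tool` naming scheme, and the sample tools are all hypothetical.

```python
class McpGateway:
    """Hypothetical gateway that routes namespaced tool calls to backends."""

    def __init__(self):
        self._backends = {}

    def register(self, namespace, tools):
        """Register a backend as a dict mapping tool name to a callable."""
        self._backends[namespace] = tools

    def list_tools(self):
        """Return every tool as 'namespace.tool', sorted for stable output."""
        return sorted(
            f"{ns}.{name}"
            for ns, tools in self._backends.items()
            for name in tools
        )

    def call(self, qualified_name, **arguments):
        """Route one call to the backend that owns the named tool."""
        namespace, _, tool = qualified_name.partition(".")
        try:
            handler = self._backends[namespace][tool]
        except KeyError:
            raise LookupError(f"unknown tool: {qualified_name}") from None
        return handler(**arguments)


gateway = McpGateway()
gateway.register("finance", {"get_ledger": lambda quarter: f"ledger for {quarter}"})
gateway.register("hr", {"headcount": lambda: 1200})

print(gateway.list_tools())
print(gateway.call("finance.get_ledger", quarter="Q3"))
```

A single entry point like this is also a natural place to attach logging and access checks, since every call passes through `call`.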

As these ecosystems mature under AAIF (Agentic AI Foundation) governance, we are seeing a massive shift toward “Git-shared” skill repositories. This collaborative approach is expected to reduce manual prompt engineering by over 80% within teams, as developers move away from writing long instructions and instead tap into modular, version-controlled libraries that Claude can call upon as needed.

Governance and the Future of Hybrid Agents

On the operational side, the future of enterprise AI depends on balancing autonomy with strict enterprise governance. With MCP SDK downloads exceeding 97 million per month, demand for robust security controls, specifically OAuth scopes and human-in-the-loop (HITL) triggers, is at an all-time high.
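
The two controls named above can be combined in a small policy check. This is a sketch under assumptions: the `ToolPolicy` table, the tool names, and the scope strings are hypothetical, and a production system would validate the OAuth token itself rather than trust a set of scope strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    required_scope: str    # OAuth scope the caller's token must carry
    needs_approval: bool   # True for actions a human must confirm


# Hypothetical policy table; tool and scope names are illustrative.
POLICIES = {
    "read_invoice": ToolPolicy("invoices:read", needs_approval=False),
    "issue_refund": ToolPolicy("payments:write", needs_approval=True),
}


def authorize(tool, token_scopes, approved_by_human=False):
    """Return 'allow', 'deny', or 'pending_approval' for one tool call."""
    policy = POLICIES.get(tool)
    if policy is None or policy.required_scope not in token_scopes:
        return "deny"  # unknown tools and missing scopes fail closed
    if policy.needs_approval and not approved_by_human:
        return "pending_approval"  # HITL trigger: wait for a person
    return "allow"


scopes = {"invoices:read", "payments:write"}
print(authorize("read_invoice", scopes))
print(authorize("issue_refund", scopes))
print(authorize("issue_refund", scopes, approved_by_human=True))
print(authorize("issue_refund", {"invoices:read"}))
```

The scope check runs before the approval check, so a caller without the right scope is denied outright instead of sending a request to a human reviewer.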

Because the Anthropic API currently allows at most 8 Skills per request, firms are pushed to be selective, relying on progressive disclosure so that only the most relevant Skills occupy the model’s active context. This may sound like a purely technical constraint, but it can improve performance by keeping the context focused, which helps hybrid agents handle complex, high-stakes tasks such as supply chain management and automated logistics.
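
Working within the eight-per-request cap mentioned above amounts to a ranking problem. The sketch below assumes relevance scores are already available from some upstream step; the `select_skills` function, the skill names, and the scores are hypothetical.

```python
MAX_PER_REQUEST = 8  # the per-request cap cited in the text


def select_skills(scored_skills, limit=MAX_PER_REQUEST, threshold=0.0):
    """Keep the highest-scoring skills, at most `limit` of them.

    `scored_skills` maps a skill name to a relevance score. Skills at or
    below `threshold` are dropped; ties are broken by name so the result
    is deterministic.
    """
    relevant = [
        (name, score) for name, score in scored_skills.items() if score > threshold
    ]
    relevant.sort(key=lambda item: (-item[1], item[0]))
    return [name for name, _ in relevant[:limit]]


# Twelve hypothetical skills with scores 0.0, 0.1, ..., 1.1.
scores = {f"skill-{i:02d}": i / 10 for i in range(12)}
selected = select_skills(scores)
print(selected)
```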

By combining the “expert logic” of verified Skills with the secure “data reach” of MCP, firms can finally cut costs through automation without sacrificing the oversight and security required at the enterprise level.

AUTHOR

Sindhiya Selvaraj

With over a decade of experience, Sindhiya Selvaraj is the Chief Architect at Fusefy, leading the design of secure, scalable AI systems grounded in governance, ethics, and regulatory compliance.