Why August 2026 Is the Deadline That Actually Matters

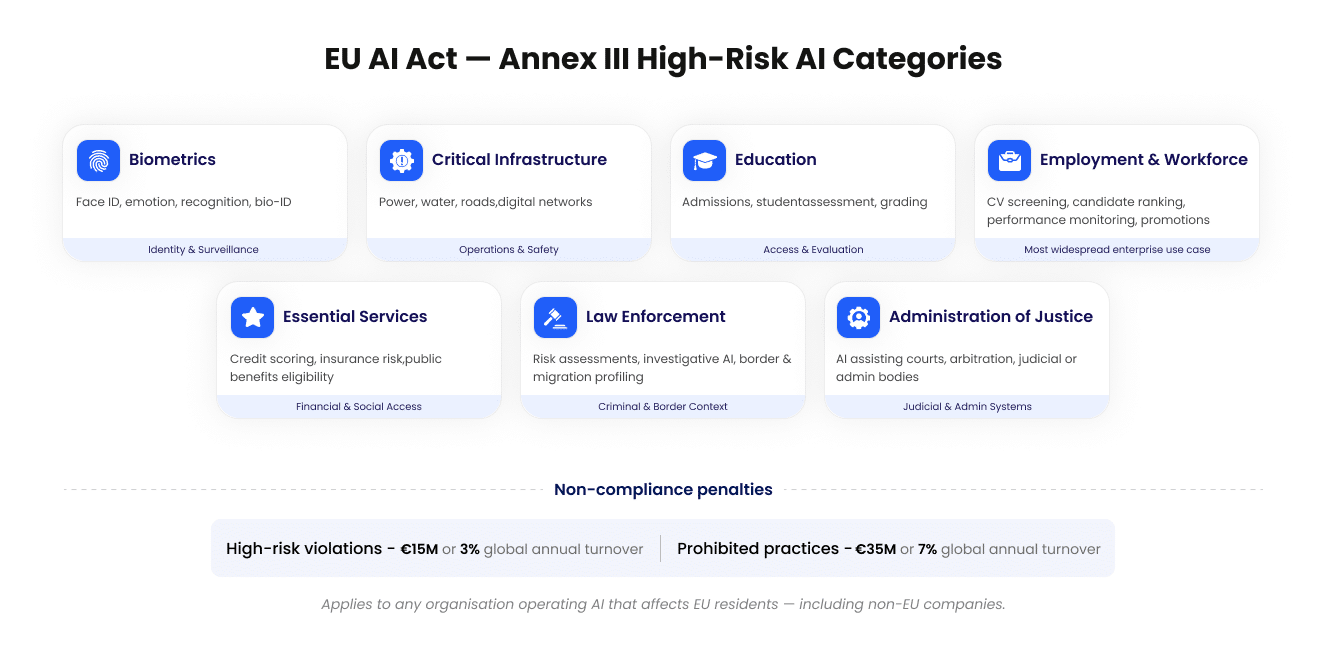

The EU AI Act has been rolling out in phases since it entered into force on August 1, 2024. Prohibitions on unacceptable-risk AI practices became enforceable in February 2025. Rules for General-Purpose AI (GPAI) models, such as foundation models from major AI providers, kicked in during August 2025. But August 2, 2026 is when the bulk of the Act’s obligations go live, covering the high-risk AI systems that sit at the heart of enterprise operations. These are the systems that decide who gets hired, who qualifies for credit, who gains access to benefits, and how critical infrastructure is managed. The Act treats these with the seriousness they deserve, and enterprises that ignore the deadline face fines of up to €15 million or 3% of global annual turnover, whichever is higher. For prohibited practices, that ceiling jumps to €35 million or 7% of global annual turnover.
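
To make the "whichever is higher" rule concrete, here is a minimal sketch of the ceiling arithmetic using the figures quoted above. The function name, the dictionary keys, and the sample turnover figures are illustrative assumptions, and the result is only the upper bound of a possible fine, not a prediction of any actual penalty.

```python
# Ceilings as quoted in this article: (fixed cap in EUR, percent of global annual turnover).
# The dictionary keys are our own shorthand, not terms from the Act.
FINE_CEILINGS = {
    "high_risk_obligations": (15_000_000, 3),
    "prohibited_practices": (35_000_000, 7),
}


def max_fine_eur(global_annual_turnover_eur: int, violation: str) -> int:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = FINE_CEILINGS[violation]
    turnover_share = global_annual_turnover_eur * percent // 100
    return max(fixed_cap, turnover_share)


# Hypothetical enterprise with EUR 2 billion in global annual turnover.
print(max_fine_eur(2_000_000_000, "high_risk_obligations"))  # 60000000
print(max_fine_eur(2_000_000_000, "prohibited_practices"))   # 140000000

# Hypothetical smaller company: the fixed cap is the higher figure.
print(max_fine_eur(100_000_000, "high_risk_obligations"))    # 15000000
```

For large enterprises the percentage term dominates, which is why exposure scales with revenue rather than stopping at the headline euro figure.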

There is one important caveat in the current regulatory environment: the European Commission’s proposed “Digital Omnibus” package, floated in late 2025, could push Annex III obligations for some systems to December 2027. The European Parliament has also voted in favor of a delay. However, as of today, no formal agreement has been reached in the Council of the EU. The August 2, 2026 deadline remains the legally binding date under existing law, and compliance experts widely advise treating it as fixed. The Omnibus delay is not guaranteed. In addition, the EU AI Act is not retroactive, meaning systems already on the market before enforcement begins may benefit from grandfathering provisions. That alone is a reason to move now rather than wait.

What “High-Risk AI” Actually Means for Your Business

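Annex III of the EU AI Act defines high-risk AI across eight categories: biometrics; critical infrastructure; education and vocational training; employment and worker management; access to essential private and public services and benefits; law enforcement; migration, asylum, and border control; and the administration of justice and democratic processes. Enterprise teams need to map their AI deployments honestly against this list.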

The Four Biggest Compliance Gaps Right Now

Research and legal analysis consistently point to the same organizational readiness failures heading into the 2026 deadline.

1. No AI inventory exists. More than half of organizations lack a systematic register of what AI systems are in production or development. Before you can classify risk, you need to know what you have. Every AI tool, model, and automated decision system needs to be catalogued, including those procured from third parties and embedded in enterprise SaaS platforms.

2. AI is being treated like traditional software. High-risk AI under the Act carries obligations that standard software procurement and agile development practices simply do not cover. Design history, data lineage, testing methodology, and bias documentation need to exist from the start.

3. Technical documentation is missing or inadequate. Annex IV specifies what technical documentation must cover: the system’s intended purpose, its design decisions, training data characteristics, performance metrics across different groups, and validation procedures. Organizations running lean development cycles with minimal documentation will struggle to meet this standard.

4. Human oversight is nominal, not structural. The Act requires genuine human oversight, meaning humans must be capable of understanding, monitoring, and intervening in AI-driven decisions. Building oversight into workflow architecture is fundamentally different from adding an approval checkbox to a dashboard.
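
The gaps above can be sketched as a simple register check. This is a minimal illustration, not a prescribed schema: the `AISystemRecord` fields, the documentation labels (our own shorthand for the Annex IV topics listed in gap 3), and the sample systems are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Shorthand labels for the documentation topics named in gap 3 (our own naming).
REQUIRED_DOC_SECTIONS = {
    "intended_purpose",
    "design_decisions",
    "training_data",
    "performance_by_group",
    "validation_procedures",
}


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory register."""
    name: str
    owner_team: str
    third_party: bool      # procured externally or embedded in a SaaS platform
    in_production: bool
    documentation: set[str] = field(default_factory=set)  # sections already written
    oversight_owner: Optional[str] = None  # named person able to intervene


def readiness_gaps(record: AISystemRecord) -> list[str]:
    """List the documentation and oversight gaps for one inventoried system."""
    gaps = []
    missing = sorted(REQUIRED_DOC_SECTIONS - record.documentation)
    if missing:
        gaps.append("missing documentation: " + ", ".join(missing))
    if record.oversight_owner is None:
        gaps.append("no named human oversight owner")
    return gaps


# Illustrative entries only; names and teams are made up.
register = [
    AISystemRecord("cv-screening", "HR", third_party=True, in_production=True,
                   documentation={"intended_purpose"}),
    AISystemRecord("credit-scoring", "Risk", third_party=False, in_production=True,
                   documentation=set(REQUIRED_DOC_SECTIONS), oversight_owner="J. Doe"),
]

for record in register:
    print(record.name, readiness_gaps(record) or "no gaps found")
```

Even a register this small makes the first gap visible: a system that is not in the list cannot be checked for anything else.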

What Enterprise Teams Must Do Before August 2, 2026

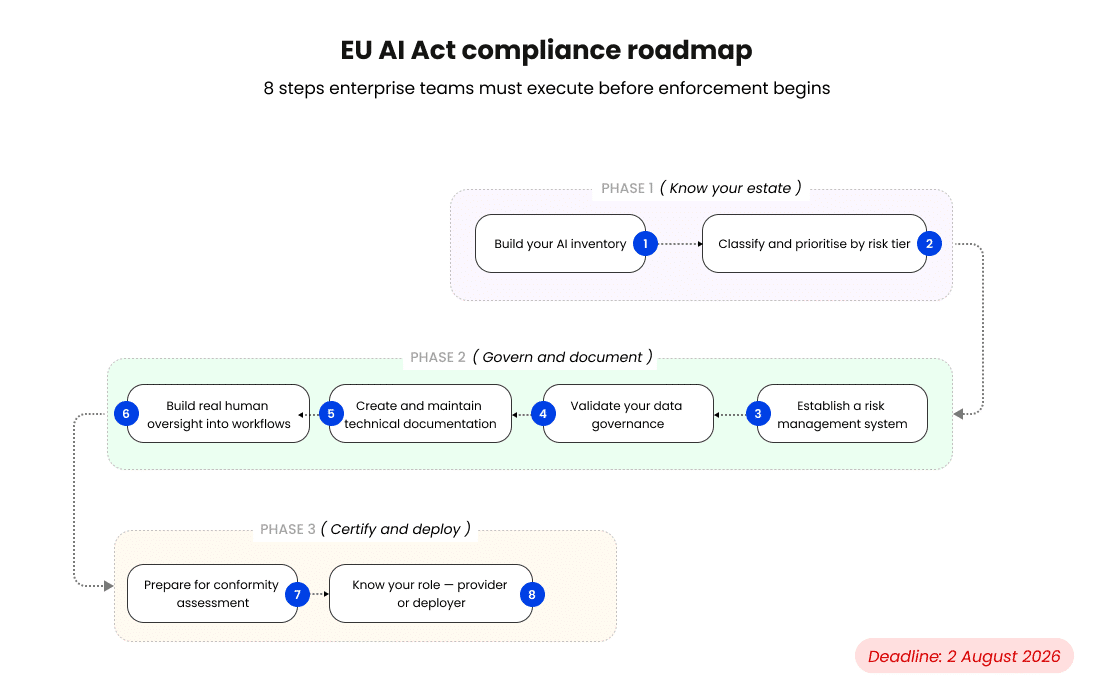

There is no shortcut to compliance, but there is a clear sequence of actions enterprise teams should be executing right now.

Step 1: Build your AI inventory. Catalogue every AI tool, model, and automated decision system in production or development, including those procured from third parties and embedded in enterprise SaaS platforms.

Step 2: Classify and prioritize by risk tier. Once you know what you have, map each system against the prohibited, high-risk, limited-risk, and minimal-risk categories. Focus your compliance investment where the regulatory exposure is highest. High-risk systems under Annex III need the full treatment.

Step 3: Establish a Risk Management System. The Act requires a continuous risk management process for high-risk AI. This means documented risk identification, evaluation, and mitigation procedures that are updated throughout the system’s lifecycle. This needs to be a living governance process, not a static report.

Step 4: Validate your data governance. High-risk AI systems must use training, validation, and test datasets that are relevant, representative, and free from known biases to the extent technically feasible. If your AI models were trained on data without documented provenance, quality metrics, or bias testing, that gap needs to be closed or formally assessed.

Step 5: Create and maintain technical documentation. Every high-risk system needs comprehensive technical documentation before it is deployed. For systems already live, this means reconstructing the documentation retroactively, which is a resource-intensive process that becomes harder the longer it is deferred.

Step 6: Build real human oversight into your workflows. Assign named individuals as human oversight owners for each high-risk system. Define the conditions under which a human must review, override, or halt AI-driven decisions, and document those intervention points explicitly.

Step 7: Prepare for conformity assessment. Depending on the sector, providers of high-risk AI may need to self-certify compliance or engage third-party assessment. For systems used in critical infrastructure, employment, education, and essential services, a formal conformity assessment under Article 43 is required before the system can legally operate in the EU.

Step 8: Know your role — provider or deployer. The Act regulates differently depending on whether your organization develops AI (provider) or deploys AI built by others (deployer). If you license a third-party model and integrate it into your platform without significant modification, you are likely a “deployer.” If you substantially modify a model or market it under your own name, you may be reclassified as a “provider” with significantly greater obligations.
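
As a rough sketch of the classification step and the provider-or-deployer question, the snippet below orders a portfolio by risk tier and applies the rule of thumb from Step 8. The tier assignments, system names, and the `likely_role` heuristic are illustrative assumptions; a real determination needs legal review of each system.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """The four tiers named in Step 2; a higher value means more regulatory exposure."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3


def prioritize(systems: dict[str, RiskTier]) -> list[str]:
    """Order system names by descending risk tier, ties broken alphabetically."""
    return sorted(systems, key=lambda name: (-systems[name], name))


def likely_role(built_in_house: bool, substantially_modified: bool,
                own_branding: bool) -> str:
    """First-pass reading of Step 8; a screening aid, not a legal determination."""
    if built_in_house or substantially_modified or own_branding:
        return "provider"
    return "deployer"


# Hypothetical portfolio with made-up tier assignments.
portfolio = {
    "support-chatbot": RiskTier.LIMITED,
    "cv-screening": RiskTier.HIGH,
    "spam-filter": RiskTier.MINIMAL,
    "credit-scoring": RiskTier.HIGH,
}

print(prioritize(portfolio))
# ['credit-scoring', 'cv-screening', 'support-chatbot', 'spam-filter']

# Licensed third-party model, integrated without significant modification.
print(likely_role(built_in_house=False, substantially_modified=False,
                  own_branding=False))  # deployer
```

Sorting by tier puts compliance effort on the highest-exposure systems first, and flagging likely providers early surfaces the heavier obligations before they become a surprise.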

The Strategic Framing: Compliance as Competitive Advantage

There is a temptation to treat the EU AI Act as a cost to absorb and a compliance exercise to complete. That framing misses the bigger picture.

The enterprises that build governed, documented, auditable AI systems are the ones that will win enterprise contracts in regulated industries, secure procurement approvals in public sector deals, and earn the trust of customers increasingly sensitized to how AI affects their lives. The August 2026 deadline converts responsible AI practices into legal requirements, but organizations that have been doing this work already are about to gain a meaningful competitive advantage over those that have not.

The groundwork for EU AI Act compliance (risk management, data governance, human oversight, technical documentation) is the same groundwork for building AI that works reliably and earns institutional trust.

Don’t wait for the Omnibus. Start your compliance clock now.

The legislative uncertainty around the Digital Omnibus delay is real, but waiting for it is the wrong posture for any enterprise serious about AI governance. If the delay passes, the compliance work done between now and August will not be wasted; it will simply put you ahead of an obligation that arrives in any case. If it does not pass, organizations that assumed it would are left scrambling in a window measured in weeks. The smarter bet is to treat August 2, 2026 as fixed, execute the compliance roadmap now, and monitor the legislative process in parallel.

Fusefy is the AI GRC command center built for exactly this moment. From automated AI inventory and risk classification to Annex IV documentation, human oversight tracking, and conformity readiness, every step of the compliance roadmap runs through a single platform. If you’re ready to map your AI systems against the EU AI Act, book your assessment call today!